Poisson distribution


In statistics and probability theory, the Poisson distribution is a discrete probability distribution that represents the following model:

Over a time interval T, an event occurs on average λ times (with λ less than 5, for example). Let X denote the random variable giving the number of times the event occurs in T. X can take the values 0, 1, 2, ...

This random variable follows the law:

$$p(k) = P(X = k) = \frac{\lambda^k e^{-\lambda}}{k!}$$

for every natural number k, where:

- λ is a positive real number;

- p(k) is the probability that the event occurs k times in T.

This is called the Poisson distribution (or Poisson law) with parameter λ.

History

The distribution was first introduced by Siméon Denis Poisson (1781–1840) and published together with his probability theory in his work Recherches sur la probabilité des jugements en matière criminelle et en matière civile (1837).[1] The work theorized about the number of wrongful convictions in a given country by focusing on certain random variables N that count, among other things, the number of discrete occurrences (sometimes called "events" or "arrivals") that take place during a time-interval of given length. The result had already been given in 1711 by Abraham de Moivre in De Mensura Sortis seu; de Probabilitate Eventuum in Ludis a Casu Fortuito Pendentibus .[2][3][4][5] This makes it an example of Stigler's law and it has prompted some authors to argue that the Poisson distribution should bear the name of de Moivre.[6][7]

In 1860, Simon Newcomb fitted the Poisson distribution to the number of stars found in a unit of space.[8] A further practical application was made by Ladislaus Bortkiewicz in 1898. Bortkiewicz showed that the frequency with which soldiers in the Prussian army were accidentally killed by horse kicks could be well modeled by a Poisson distribution.[9]

Definitions

Probability mass function

A discrete random variable X is said to have a Poisson distribution with parameter λ > 0 if it has a probability mass function given by:[10]

$$f(k; \lambda) = \Pr(X = k) = \frac{\lambda^k e^{-\lambda}}{k!},$$

where

- k is the number of occurrences (k = 0, 1, 2, ...)

- e is Euler's number (e = 2.71828...)

- k! = k(k–1) ··· (3)(2)(1) is the factorial.

The positive real number λ is equal to the expected value of X and also to its variance.[11]
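As a minimal sketch, the probability mass function can be evaluated numerically and the claim that both the mean and the variance equal λ can be checked by truncating the infinite sums. The names `poisson_pmf` and `mean_and_variance` and the cutoff `k_max` are illustrative assumptions, not part of the source.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam), evaluated in log space for stability."""
    if k < 0:
        return 0.0
    if lam == 0:
        return 1.0 if k == 0 else 0.0
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def mean_and_variance(lam: float, k_max: int = 200) -> tuple[float, float]:
    """Approximate the mean and variance by truncating the sums at k_max.

    The cutoff is an assumption that is adequate for moderate lam only.
    """
    probs = [poisson_pmf(k, lam) for k in range(k_max + 1)]
    mean = sum(k * p for k, p in enumerate(probs))
    var = sum((k - mean) ** 2 * p for k, p in enumerate(probs))
    return mean, var


if __name__ == "__main__":
    m, v = mean_and_variance(3.5)
    print(f"lambda = 3.5: mean ~ {m:.6f}, variance ~ {v:.6f}")
```

Working in log space avoids overflow in `lam ** k` and `k!` when k is large; for small k the direct formula would do equally well.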

The Poisson distribution can be applied to systems with a large number of possible events, each of which is rare. The number of such events that occur during a fixed time interval is, under the right circumstances, a random number with a Poisson distribution.

The equation can be adapted if, instead of the average number of events λ, we are given the average rate r at which events occur. Then λ = rt and:[12]

$$P(k \text{ events in interval } t) = \frac{(rt)^k e^{-rt}}{k!}.$$

Examples

The Poisson distribution may be useful to model events such as:

- the number of meteorites greater than 1-meter diameter that strike Earth in a year;

- the number of laser photons hitting a detector in a particular time interval;

- the number of students achieving a low and high mark in an exam; and

- locations of defects and dislocations in materials.

Examples of the occurrence of random points in space are: the locations of asteroid impacts with earth (2-dimensional), the locations of imperfections in a material (3-dimensional), and the locations of trees in a forest (2-dimensional).[13]

Assumptions and validity

The Poisson distribution is an appropriate model if the following assumptions are true:

- k, a nonnegative integer, is the number of times an event occurs in an interval.

- The occurrence of one event does not affect the probability of a second event.

- The average rate at which events occur is independent of any occurrences.

- Two events cannot occur at exactly the same instant.

If these conditions are true, then k is a Poisson random variable; the distribution of k is a Poisson distribution.

The Poisson distribution is also the limit of a binomial distribution, for which the probability of success for each trial equals λ divided by the number of trials, as the number of trials approaches infinity (see Related distributions).

Examples of probability for Poisson distributions

|

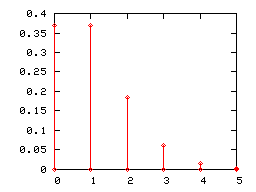

On a particular river, overflow floods occur once every 100 years on average. Calculate the probability of k = 0, 1, 2, 3, 4, 5, or 6 overflow floods in a 100-year interval, assuming the Poisson model is appropriate. Because the average event rate is one overflow flood per 100 years, λ = 1

|

The probability for 0 to 6 overflow floods in a 100-year period. |

|

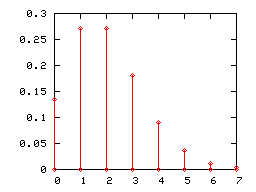

It has been reported that the average number of goals in a World Cup soccer match is approximately 2.5 and the Poisson model is appropriate.[14] Because the average event rate is 2.5 goals per match, λ = 2.5 .

|

The probability for 0 to 7 goals in a match. |
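The probabilities for these two examples can be recomputed directly from the probability mass function. The following is a small sketch; `probability_table` is a hypothetical helper name, and the values of λ and the ranges of k are the ones stated in the examples.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam)."""
    return lam ** k * math.exp(-lam) / math.factorial(k)


def probability_table(lam: float, k_max: int) -> list[tuple[int, float]]:
    """Pairs (k, P(X = k)) for k = 0..k_max."""
    return [(k, poisson_pmf(k, lam)) for k in range(k_max + 1)]


floods = probability_table(1.0, 6)   # overflow floods per 100 years
goals = probability_table(2.5, 7)    # goals per match

if __name__ == "__main__":
    for title, table in (("floods, lambda = 1", floods),
                         ("goals, lambda = 2.5", goals)):
        print(title)
        for k, p in table:
            print(f"  k = {k}: P = {p:.4f}")
```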

Once in an interval events: The special case of λ = 1 and k = 0

Suppose that astronomers estimate that large meteorites (above a certain size) hit the earth on average once every 100 years ( λ = 1 event per 100 years), and that the number of meteorite hits follows a Poisson distribution. What is the probability of k = 0 meteorite hits in the next 100 years?

$$P(k = 0) = \frac{1^0 e^{-1}}{0!} = \frac{1}{e} \approx 0.37$$

Under these assumptions, the probability that no large meteorites hit the earth in the next 100 years is roughly 0.37. The remaining 1 − 0.37 = 0.63 is the probability of 1, 2, 3, or more large meteorite hits in the next 100 years. In an example above, an overflow flood occurred once every 100 years (λ = 1). The probability of no overflow floods in 100 years was roughly 0.37, by the same calculation.

In general, if an event occurs on average once per interval (λ = 1), and the events follow a Poisson distribution, then P(0 events in next interval) ≈ 0.37. In addition, P(exactly one event in next interval) ≈ 0.37, as shown in the table for overflow floods.

Examples that violate the Poisson assumptions

The number of students who arrive at the student union per minute will likely not follow a Poisson distribution, because the rate is not constant (low rate during class time, high rate between class times) and the arrivals of individual students are not independent (students tend to come in groups). The non-constant arrival rate may be modeled as a mixed Poisson distribution, and the arrival of groups rather than individual students as a compound Poisson process.

The number of magnitude 5 earthquakes per year in a country may not follow a Poisson distribution, if one large earthquake increases the probability of aftershocks of similar magnitude.

Examples in which at least one event is guaranteed are not Poisson distributed; but may be modeled using a zero-truncated Poisson distribution.

Count distributions in which the number of intervals with zero events is higher than predicted by a Poisson model may be modeled using a zero-inflated model.

Properties

Descriptive statistics

- The expected value of a Poisson random variable is λ.

- The variance of a Poisson random variable is also λ.

- The coefficient of variation is $\lambda^{-1/2}$, while the index of dispersion is 1.[5]

- The mean absolute deviation about the mean is[5] $$\operatorname{E}\left[|X - \lambda|\right] = \frac{2 \lambda^{\lfloor\lambda\rfloor + 1} e^{-\lambda}}{\lfloor\lambda\rfloor!}.$$

- The mode of a Poisson-distributed random variable with non-integer λ is equal to $\lfloor\lambda\rfloor$, which is the largest integer less than or equal to λ. This is also written as floor(λ). When λ is a positive integer, the modes are λ and λ − 1.

- All of the cumulants of the Poisson distribution are equal to the expected value λ. The n-th factorial moment of the Poisson distribution is $\lambda^n$.

- The expected value of a Poisson process is sometimes decomposed into the product of intensity and exposure (or more generally expressed as the integral of an "intensity function" over time or space, sometimes described as "exposure").[15]
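Two of the descriptive statistics listed above, the mode and the mean absolute deviation, lend themselves to a quick numerical check. The sketch below assumes the closed form 2·λ^(⌊λ⌋+1)·e^(−λ)/⌊λ⌋! for the mean absolute deviation; the function names and the cutoff `k_max` are illustrative.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam), lam > 0."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def numeric_mode(lam: float, k_max: int = 200) -> int:
    """Value of k in 0..k_max with the largest probability."""
    return max(range(k_max + 1), key=lambda k: poisson_pmf(k, lam))


def numeric_mad(lam: float, k_max: int = 200) -> float:
    """Truncated sum for E|X - lam|."""
    return sum(abs(k - lam) * poisson_pmf(k, lam) for k in range(k_max + 1))


def closed_form_mad(lam: float) -> float:
    """Closed form 2 * lam**(floor(lam) + 1) * exp(-lam) / floor(lam)!."""
    m = math.floor(lam)
    return 2 * lam ** (m + 1) * math.exp(-lam) / math.factorial(m)


if __name__ == "__main__":
    for lam in (0.7, 2.5, 7.3):
        print(lam, numeric_mode(lam), math.floor(lam),
              numeric_mad(lam), closed_form_mad(lam))
```

For an integer λ the two modes λ − 1 and λ have equal probability, so an arg-max search may return either one; the loop above therefore uses non-integer values only.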

Median

Bounds for the median (ν) of the distribution are known and are sharp:[16]

$$\lambda - \ln 2 \le \nu < \lambda + \frac{1}{3}.$$
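A short sketch can locate the median numerically and test it against the bounds λ − ln 2 ≤ ν < λ + 1/3. The helper names are hypothetical, and the running-sum approach assumes a moderate λ so that `exp(-lam)` does not underflow.

```python
import math


def poisson_median(lam: float) -> int:
    """Smallest integer k with P(X <= k) >= 1/2 (moderate lam assumed)."""
    term = math.exp(-lam)      # P(X = 0)
    cdf = term
    k = 0
    while cdf < 0.5:
        k += 1
        term *= lam / k        # P(X = k) obtained from P(X = k - 1)
        cdf += term
    return k


def median_within_bounds(lam: float) -> bool:
    """Check lam - ln 2 <= median < lam + 1/3."""
    nu = poisson_median(lam)
    return lam - math.log(2) <= nu < lam + 1 / 3


if __name__ == "__main__":
    for lam in (0.5, 1.0, 2.5, 10.0):
        print(lam, poisson_median(lam), median_within_bounds(lam))
```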

Higher moments

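The higher non-centered moments $m_k$ of the Poisson distribution are Touchard polynomials in λ:

$$m_k = \sum_{i=0}^{k} \lambda^i \begin{Bmatrix} k \\ i \end{Bmatrix},$$

where the braces { } denote Stirling numbers of the second kind.[17][18] In other words,

$$\operatorname{E}[X] = \lambda, \quad \operatorname{E}[X(X-1)] = \lambda^2, \quad \operatorname{E}[X(X-1)(X-2)] = \lambda^3, \quad \ldots$$

When the expected value is set to λ = 1, Dobinski's formula implies that the n‑th moment is equal to the number of partitions of a set of size n.

A simple upper bound is:[19]

$$m_k = \operatorname{E}[X^k] \le \left(\frac{k}{\log(k/\lambda + 1)}\right)^k \le \lambda^k \exp\left(\frac{k^2}{2\lambda}\right).$$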
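The Touchard-polynomial form of the raw moments can be sketched as follows: build a row of Stirling numbers of the second kind with the standard recurrence S(n, i) = i·S(n−1, i) + S(n−1, i−1), then sum S(n, i)·λ^i. The function names are illustrative, and the numeric cross-check truncates the infinite sum at an assumed `k_max`.

```python
import math


def stirling2_row(n: int) -> list[int]:
    """Stirling numbers of the second kind S(n, 0), ..., S(n, n)."""
    row = [1]                                    # S(0, 0) = 1
    for m in range(1, n + 1):
        new = [0] * (m + 1)
        for i in range(1, m + 1):
            prev_same = row[i] if i < m else 0   # S(m-1, i)
            new[i] = i * prev_same + row[i - 1]  # recurrence step
        row = new
    return row


def raw_moment(n: int, lam: float) -> float:
    """E[X^n] for X ~ Poisson(lam) as a Touchard polynomial in lam."""
    return sum(s * lam ** i for i, s in enumerate(stirling2_row(n)))


def raw_moment_numeric(n: int, lam: float, k_max: int = 200) -> float:
    """Truncated sum of k**n * P(X = k), used only as a cross-check."""
    total, term = 0.0, math.exp(-lam)
    for k in range(k_max + 1):
        total += k ** n * term
        term *= lam / (k + 1)
    return total


if __name__ == "__main__":
    # With lam = 1 the moments are the Bell numbers (set partition counts).
    print([raw_moment(n, 1) for n in range(6)])
    print(raw_moment(4, 2.0), raw_moment_numeric(4, 2.0))
```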

Sums of Poisson-distributed random variables

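If $X_i \sim \operatorname{Pois}(\lambda_i)$ for $i = 1, \dots, n$ are independent, then $\sum_{i=1}^{n} X_i \sim \operatorname{Pois}\left(\sum_{i=1}^{n} \lambda_i\right)$.[20] A converse is Raikov's theorem, which says that if the sum of two independent random variables is Poisson-distributed, then so are each of those two independent random variables.[21][22]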
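The additivity property can be illustrated for two variables by convolving their probability mass functions and comparing the result with a single Poisson distribution whose parameter is the sum. This is a sketch with illustrative names and parameter values, not a proof.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam)."""
    return lam ** k * math.exp(-lam) / math.factorial(k)


def pmf_of_sum(k: int, lam1: float, lam2: float) -> float:
    """P(X1 + X2 = k) for independent Poisson X1, X2, by discrete convolution."""
    return sum(poisson_pmf(j, lam1) * poisson_pmf(k - j, lam2)
               for j in range(k + 1))


if __name__ == "__main__":
    lam1, lam2 = 1.2, 3.4
    for k in range(6):
        print(k, pmf_of_sum(k, lam1, lam2), poisson_pmf(k, lam1 + lam2))
```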

Maximum entropy

It is a maximum-entropy distribution among the set of generalized binomial distributions $B_n(\lambda)$ with mean λ and $n \to \infty$,[23] where a generalized binomial distribution is defined as a distribution of the sum of N independent but not identically distributed Bernoulli variables.

Other properties

- The Poisson distributions are infinitely divisible probability distributions.[24][5]

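- The directed Kullback–Leibler divergence of $P = \operatorname{Pois}(\lambda)$ from $P_0 = \operatorname{Pois}(\lambda_0)$ is given by $$D_{\text{KL}}(P \parallel P_0) = \lambda_0 - \lambda + \lambda \log\frac{\lambda}{\lambda_0}.$$

- If $\lambda \ge 1$ is an integer, then $Y \sim \operatorname{Pois}(\lambda)$ satisfies $\Pr(Y \ge \operatorname{E}[Y]) \ge \tfrac{1}{2}$ and $\Pr(Y \le \operatorname{E}[Y]) \ge \tfrac{1}{2}$.[25][failed verification]

- Bounds for the tail probabilities of a Poisson random variable can be derived using a Chernoff bound argument.[26]

- The upper tail probability can be tightened (by a factor of at least two) as follows:[27] $$P(X \ge x) \le \frac{e^{-D_{\text{KL}}(Q \parallel P)}}{\max\left(2, \sqrt{4\pi D_{\text{KL}}(Q \parallel P)}\right)}, \quad \text{for } x > \lambda,$$ where $D_{\text{KL}}(Q \parallel P)$ is the Kullback–Leibler divergence of $Q = \operatorname{Pois}(x)$ from $P = \operatorname{Pois}(\lambda)$.

- Inequalities that relate the distribution function of a Poisson random variable $X \sim \operatorname{Pois}(\lambda)$ to the standard normal distribution function $\Phi$ are as follows:[28] $$\Phi\left(\operatorname{sign}(k - \lambda)\sqrt{2 D_{\text{KL}}(Q_- \parallel P)}\right) < P(X \le k) < \Phi\left(\operatorname{sign}(k + 1 - \lambda)\sqrt{2 D_{\text{KL}}(Q_+ \parallel P)}\right), \quad \text{for } k > 0,$$ where $D_{\text{KL}}(Q_- \parallel P)$ is the Kullback–Leibler divergence of $Q_- = \operatorname{Pois}(k)$ from $P = \operatorname{Pois}(\lambda)$ and $D_{\text{KL}}(Q_+ \parallel P)$ is the Kullback–Leibler divergence of $Q_+ = \operatorname{Pois}(k + 1)$ from $P$.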

Poisson races

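Let $X \sim \operatorname{Pois}(\lambda)$ and $Y \sim \operatorname{Pois}(\mu)$ be independent random variables, with $\lambda < \mu$; then we have that

$$\frac{e^{-(\sqrt{\mu} - \sqrt{\lambda})^2}}{(\lambda + \mu)^2} - \frac{e^{-(\lambda + \mu)}}{2\sqrt{\lambda\mu}} - \frac{e^{-(\lambda + \mu)}}{4\lambda\mu} \le P(X - Y \ge 0) \le e^{-(\sqrt{\mu} - \sqrt{\lambda})^2}.$$

The upper bound is proved using a standard Chernoff bound.

The lower bound can be proved by noting that $P(X - Y \ge 0 \mid X + Y = i)$ is the probability that $Z \ge \tfrac{i}{2}$, where $Z \sim \operatorname{Bin}\left(i, \tfrac{\lambda}{\lambda + \mu}\right)$, which is bounded below by $\tfrac{1}{(i+1)^2} e^{-i D\left(0.5 \,\parallel\, \frac{\lambda}{\lambda + \mu}\right)}$, where $D$ is relative entropy (see the entry on bounds on tails of binomial distributions for details). Further noting that $X + Y \sim \operatorname{Pois}(\lambda + \mu)$, and computing a lower bound on the unconditional probability gives the result. More details can be found in the appendix of Kamath et al.[29]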

Related distributions

As a Binomial distribution with infinitesimal time-steps

The Poisson distribution can be derived as a limiting case of the binomial distribution as the number of trials goes to infinity and the expected number of successes remains fixed (see law of rare events below). Therefore, it can be used as an approximation of the binomial distribution if n is sufficiently large and p is sufficiently small. The Poisson distribution is a good approximation of the binomial distribution if n is at least 20 and p is smaller than or equal to 0.05, and an excellent approximation if n ≥ 100 and np ≤ 10.[30] Letting $F_{\text{B}}$ and $F_{\text{P}}$ be the respective cumulative distribution functions of the binomial and Poisson distributions, one has:

$$F_{\text{B}}(k; n, p) \approx F_{\text{P}}(k; \lambda = np).$$

One derivation of this uses probability-generating functions.[31] Consider a Bernoulli trial (coin-flip) whose probability of one success (or expected number of successes) is $\lambda \le 1$ within a given interval. Split the interval into n parts, and perform a trial in each subinterval with probability $\tfrac{\lambda}{n}$. The probability of k successes out of n trials over the entire interval is then given by the binomial distribution

$$p_k^{(n)} = \binom{n}{k} \left(\frac{\lambda}{n}\right)^k \left(1 - \frac{\lambda}{n}\right)^{n-k},$$

whose generating function is:

$$P^{(n)}(x) = \sum_{k=0}^{n} p_k^{(n)} x^k = \left(1 - \frac{\lambda}{n} + \frac{\lambda}{n} x\right)^n.$$

Taking the limit as n increases to infinity (with x fixed) and applying the product limit definition of the exponential function, this reduces to the generating function of the Poisson distribution:

$$\lim_{n \to \infty} P^{(n)}(x) = \lim_{n \to \infty} \left(1 + \frac{\lambda(x - 1)}{n}\right)^n = e^{\lambda(x - 1)} = \sum_{k=0}^{\infty} e^{-\lambda} \frac{\lambda^k}{k!} x^k.$$
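The limiting argument can be illustrated numerically: with λ held fixed and p = λ/n, the largest pointwise gap between the binomial and Poisson probabilities shrinks as n grows. The sketch below uses illustrative names and an assumed range of k.

```python
import math


def binomial_pmf(k: int, n: int, p: float) -> float:
    """P(k successes in n independent trials with success probability p)."""
    if k > n:
        return 0.0
    return math.comb(n, k) * p ** k * (1 - p) ** (n - k)


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Poisson(lam)."""
    return lam ** k * math.exp(-lam) / math.factorial(k)


def max_abs_gap(n: int, lam: float, k_max: int = 20) -> float:
    """Largest |Binomial(n, lam/n) - Poisson(lam)| over k = 0..k_max."""
    p = lam / n
    return max(abs(binomial_pmf(k, n, p) - poisson_pmf(k, lam))
               for k in range(k_max + 1))


if __name__ == "__main__":
    for n in (10, 100, 1000, 10000):
        print(n, max_abs_gap(n, 2.0))
```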

General

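- If $X_1 \sim \operatorname{Pois}(\lambda_1)$ and $X_2 \sim \operatorname{Pois}(\lambda_2)$ are independent, then the difference $Y = X_1 - X_2$ follows a Skellam distribution.

- If $X_1 \sim \operatorname{Pois}(\lambda_1)$ and $X_2 \sim \operatorname{Pois}(\lambda_2)$ are independent, then the distribution of $X_1$ conditional on $X_1 + X_2$ is a binomial distribution. Specifically, if $X_1 + X_2 = k$, then $X_1 \mid X_1 + X_2 = k \sim \operatorname{Binom}\left(k, \tfrac{\lambda_1}{\lambda_1 + \lambda_2}\right)$. More generally, if X1, X2, ..., Xn are independent Poisson random variables with parameters λ1, λ2, ..., λn then, given $\sum_{j=1}^{n} X_j = k$, it follows that $X_i \mid \sum_{j=1}^{n} X_j = k \sim \operatorname{Binom}\left(k, \tfrac{\lambda_i}{\sum_{j=1}^{n} \lambda_j}\right)$. In fact, $\{X_i\} \mid \sum_{j=1}^{n} X_j = k \sim \operatorname{Multinom}\left(k, \left\{\tfrac{\lambda_i}{\sum_{j=1}^{n} \lambda_j}\right\}\right)$.

- If $X \sim \operatorname{Pois}(\lambda)$ and the distribution of $Y$ conditional on X = k is a binomial distribution, $Y \mid (X = k) \sim \operatorname{Binom}(k, p)$, then the distribution of Y follows a Poisson distribution $Y \sim \operatorname{Pois}(\lambda p)$. In fact, if, conditional on $\{X = k\}$, $\{Y_i\}$ follows a multinomial distribution, $\{Y_i\} \mid (X = k) \sim \operatorname{Multinom}(k, p_i)$, then each $Y_i$ follows an independent Poisson distribution $Y_i \sim \operatorname{Pois}(\lambda p_i)$, with $\rho(Y_i, Y_j) = 0$.

- The Poisson distribution is a special case of the discrete compound Poisson distribution (or stuttering Poisson distribution) with only a parameter.[32][33] The discrete compound Poisson distribution can be deduced from the limiting distribution of univariate multinomial distribution. It is also a special case of a compound Poisson distribution.

- For sufficiently large values of λ (say λ > 1000), the normal distribution with mean λ and variance λ (standard deviation $\sqrt{\lambda}$) is an excellent approximation to the Poisson distribution. If λ is greater than about 10, then the normal distribution is a good approximation if an appropriate continuity correction is performed, i.e., if P(X ≤ x), where x is a non-negative integer, is replaced by P(X ≤ x + 0.5).

- Variance-stabilizing transformation: If $X \sim \operatorname{Pois}(\lambda)$, then[5] $Y = 2\sqrt{X} \approx \mathcal{N}(2\sqrt{\lambda}; 1)$ and[34] $Y = \sqrt{X} \approx \mathcal{N}(\sqrt{\lambda}; 1/4)$. Under this transformation, the convergence to normality (as λ increases) is far faster than for the untransformed variable.[citation needed] Other, slightly more complicated, variance-stabilizing transformations are available,[5] one of which is the Anscombe transform.[35] See Data transformation (statistics) for more general uses of transformations.

- If for every t > 0 the number of arrivals in the time interval [0, t] follows the Poisson distribution with mean λt, then the sequence of inter-arrival times are independent and identically distributed exponential random variables having mean 1/λ.[36]

- The cumulative distribution functions of the Poisson and chi-squared distributions are related in the following ways:[5] $$F_{\text{Poisson}}(k; \lambda) = 1 - F_{\chi^2}(2\lambda; 2(k + 1)), \quad \text{integer } k,$$ and[5] $$P(X = k) = F_{\chi^2}(2\lambda; 2(k + 1)) - F_{\chi^2}(2\lambda; 2k).$$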
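The link between Poisson counts and exponential inter-arrival times stated in the list above can be explored by simulation. The sketch below runs the construction in the converse direction: it draws independent exponential gaps with rate λ and counts how many arrivals fall in [0, t]; the sample mean and variance of the counts should then both be close to λt. The function names, seed, and trial count are illustrative assumptions.

```python
import random


def count_arrivals(rate: float, t: float, rng: random.Random) -> int:
    """Number of arrivals in [0, t] when gaps are i.i.d. Exponential(rate)."""
    clock = rng.expovariate(rate)   # time of the first arrival
    count = 0
    while clock <= t:
        count += 1
        clock += rng.expovariate(rate)
    return count


def simulate(rate: float, t: float, trials: int, seed: int = 0) -> tuple[float, float]:
    """Sample mean and unbiased sample variance of the arrival counts."""
    rng = random.Random(seed)
    counts = [count_arrivals(rate, t, rng) for _ in range(trials)]
    mean = sum(counts) / trials
    var = sum((c - mean) ** 2 for c in counts) / (trials - 1)
    return mean, var


if __name__ == "__main__":
    mean, var = simulate(rate=1.5, t=2.0, trials=20000)
    print(f"expected 3.0; sample mean {mean:.3f}, sample variance {var:.3f}")
```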

Poisson approximation

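Assume $X_1 \sim \operatorname{Pois}(\lambda_1), X_2 \sim \operatorname{Pois}(\lambda_2), \dots, X_n \sim \operatorname{Pois}(\lambda_n)$ are independent, where $\lambda_1 + \lambda_2 + \dots + \lambda_n = 1$; then[37] $(X_1, X_2, \dots, X_n)$ is multinomially distributed, $(X_1, X_2, \dots, X_n) \sim \operatorname{Mult}(N, \lambda_1, \lambda_2, \dots, \lambda_n)$, conditioned on $N = X_1 + X_2 + \dots + X_n$.

This means[26], among other things, that for any nonnegative function $f(x_1, x_2, \dots, x_n)$, if $(Y_1, Y_2, \dots, Y_n) \sim \operatorname{Mult}(m, \mathbf{p})$ is multinomially distributed, then

$$\operatorname{E}[f(Y_1, Y_2, \dots, Y_n)] \le e\sqrt{m}\, \operatorname{E}[f(X_1, X_2, \dots, X_n)],$$

where the $X_i \sim \operatorname{Pois}(m p_i)$ are independent.

The factor of $e\sqrt{m}$ can be replaced by 2 if $f$ is further assumed to be monotonically increasing or decreasing.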

Bivariate Poisson distribution

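This distribution has been extended to the bivariate case.[38] The generating function for this distribution is

$$g(u, v) = \exp\left[(\theta_1 - \theta_{12})(u - 1) + (\theta_2 - \theta_{12})(v - 1) + \theta_{12}(uv - 1)\right]$$

with

$$\theta_1, \theta_2 > \theta_{12} > 0.$$

The marginal distributions are Poisson(θ1) and Poisson(θ2) and the correlation coefficient is limited to the range

$$0 \le \rho \le \min\left\{\sqrt{\frac{\theta_1}{\theta_2}}, \sqrt{\frac{\theta_2}{\theta_1}}\right\}.$$

A simple way to generate a bivariate Poisson distribution $X_1, X_2$ is to take three independent Poisson distributions $Y_1, Y_2, Y_3$ with means $\lambda_1, \lambda_2, \lambda_3$ and then set $X_1 = Y_1 + Y_3$, $X_2 = Y_2 + Y_3$. The probability function of the bivariate Poisson distribution is

$$\Pr(X_1 = k_1, X_2 = k_2) = \exp(-\lambda_1 - \lambda_2 - \lambda_3) \frac{\lambda_1^{k_1}}{k_1!} \frac{\lambda_2^{k_2}}{k_2!} \sum_{k=0}^{\min(k_1, k_2)} \binom{k_1}{k} \binom{k_2}{k} k! \left(\frac{\lambda_3}{\lambda_1 \lambda_2}\right)^k.$$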
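The three-variable construction can be sketched in code. Python's standard library has no Poisson sampler, so the example swaps in Knuth's multiplication method, which is adequate for small means only; all function names, the seed, and the parameter values are illustrative assumptions. With X1 = Y1 + Y3 and X2 = Y2 + Y3, the sample covariance should be close to the mean of the shared component Y3.

```python
import math
import random


def sample_poisson(lam: float, rng: random.Random) -> int:
    """Draw from Poisson(lam) with Knuth's method (suitable for small lam)."""
    threshold = math.exp(-lam)
    k, prod = 0, rng.random()
    while prod > threshold:
        k += 1
        prod *= rng.random()
    return k


def sample_bivariate(lam1: float, lam2: float, lam3: float,
                     rng: random.Random) -> tuple[int, int]:
    """Return (X1, X2) with X1 = Y1 + Y3 and X2 = Y2 + Y3."""
    y1 = sample_poisson(lam1, rng)
    y2 = sample_poisson(lam2, rng)
    y3 = sample_poisson(lam3, rng)   # shared component induces correlation
    return y1 + y3, y2 + y3


def sample_stats(lam1: float, lam2: float, lam3: float,
                 n: int = 20000, seed: int = 1) -> tuple[float, float, float]:
    """Sample means of X1 and X2 and their sample covariance."""
    rng = random.Random(seed)
    pairs = [sample_bivariate(lam1, lam2, lam3, rng) for _ in range(n)]
    m1 = sum(a for a, _ in pairs) / n
    m2 = sum(b for _, b in pairs) / n
    cov = sum((a - m1) * (b - m2) for a, b in pairs) / (n - 1)
    return m1, m2, cov


if __name__ == "__main__":
    print(sample_stats(1.0, 2.0, 0.5))   # expect roughly (1.5, 2.5, 0.5)
```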

Free Poisson distribution

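The free Poisson distribution[39] with jump size α and rate λ arises in free probability theory as the limit of repeated free convolution

$$\left(\left(1 - \frac{\lambda}{N}\right)\delta_0 + \frac{\lambda}{N}\delta_\alpha\right)^{\boxplus N}$$

as N → ∞.

In other words, let $X_N$ be random variables so that $X_N$ has value α with probability $\tfrac{\lambda}{N}$ and value 0 with the remaining probability. Assume also that the family $X_1, X_2, \ldots$ are freely independent. Then the limit as N → ∞ of the law of $X_1 + \cdots + X_N$ is given by the free Poisson law with parameters λ, α.

This definition is analogous to one of the ways in which the classical Poisson distribution is obtained from a (classical) Poisson process.

The measure associated to the free Poisson law is given by[40]

$$\mu = \begin{cases} (1 - \lambda)\delta_0 + \nu, & \text{if } 0 \le \lambda \le 1 \\ \nu, & \text{if } \lambda > 1, \end{cases}$$

where

$$\nu = \frac{1}{2\pi\alpha t} \sqrt{4\lambda\alpha^2 - (t - \alpha(1 + \lambda))^2}\, dt$$

and has support $[\alpha(1 - \sqrt{\lambda})^2, \alpha(1 + \sqrt{\lambda})^2]$.

This law also arises in random matrix theory as the Marchenko–Pastur law. Its free cumulants are equal to $\kappa_n = \lambda\alpha^n$.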

Some transforms of this law

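We give values of some important transforms of the free Poisson law; the computation can be found, e.g., in the book Lectures on the Combinatorics of Free Probability by A. Nica and R. Speicher.[41]

The R-transform of the free Poisson law is given by

$$R(z) = \frac{\lambda\alpha}{1 - \alpha z}.$$

The Cauchy transform (which is the negative of the Stieltjes transformation) is given by

$$G(z) = \frac{z + \alpha - \lambda\alpha - \sqrt{(z - \alpha(1 + \lambda))^2 - 4\lambda\alpha^2}}{2\alpha z}.$$

The S-transform is given by

$$S(z) = \frac{1}{z + \lambda}$$

in the case that α = 1.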

Weibull and stable count

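Poisson's probability mass function $f(k; \lambda)$ can be expressed in a form similar to the product distribution of a Weibull distribution and a variant form of the stable count distribution. The variable $(k + 1)$ can be regarded as the inverse of Lévy's stability parameter in the stable count distribution:

$$f(k; \lambda) = \int_0^\infty \frac{1}{u}\, W_{k+1}\left(\frac{\lambda}{u}\right) \left[(k + 1)\, u^k\, \mathfrak{N}_{\frac{1}{k+1}}\left(u^{k+1}\right)\right] du,$$

where $\mathfrak{N}_\alpha(\nu)$ is a standard stable count distribution of shape $\alpha = 1/(k + 1)$, and $W_{k+1}(x)$ is a standard Weibull distribution of shape $k + 1$.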

Computing p(k)

This quantity is obtained by working with a binomial distribution with parameters (T; λ/T). If T is taken to be large, it can be shown that the limit of this binomial distribution as T tends to infinity is the Poisson distribution.

Expected value, variance, and standard deviation

- The expected value of the Poisson distribution is λ.

- The variance of the Poisson distribution is λ.

- The standard deviation of the Poisson distribution is $\sqrt{\lambda}$.

Fields of application

The Poisson distribution was long used mainly to count rare events, such as child suicides, the arrival of ships at a port, or accidents caused by horse kicks in armies (the study by Ladislaus Bortkiewicz).

Over the past few decades, its use has spread to other fields. It is now widely used in telecommunications (counting the number of calls in a given period), statistical quality control, the description of certain phenomena in radioactive decay (the decay of radioactive nuclei follows an exponential law whose parameter is also called λ), biology, and meteorology.

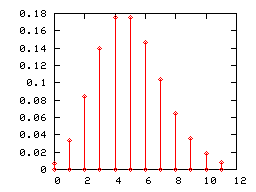

Bar charts

Like any discrete probability distribution, the Poisson distribution can be represented with bar charts. The charts show the Poisson distribution with parameters 1, 2, and 5.

The chart of the Poisson distribution with parameter 5 begins to resemble the normal (Gaussian) distribution with expected value 5 and variance 5. For this reason, when λ is greater than 5, the normal distribution model is often preferred.
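How closely the normal distribution tracks the Poisson distribution can be checked numerically. The sketch below compares the exact value of P(X ≤ x) with the normal approximation of mean λ and variance λ, with and without a continuity correction of 0.5. The function names and the chosen values of λ and x are illustrative assumptions.

```python
import math


def poisson_cdf(x: int, lam: float) -> float:
    """Exact P(X <= x) for X ~ Poisson(lam), by summing the probabilities."""
    term = math.exp(-lam)
    total = term
    for k in range(1, x + 1):
        term *= lam / k
        total += term
    return total


def normal_cdf(z: float) -> float:
    """Standard normal distribution function, via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def normal_approx(x: int, lam: float, continuity: bool = True) -> float:
    """Normal approximation to P(X <= x), optionally continuity-corrected."""
    shift = 0.5 if continuity else 0.0
    return normal_cdf((x + shift - lam) / math.sqrt(lam))


if __name__ == "__main__":
    for lam, x in ((5.0, 5), (20.0, 20)):
        print(lam, x, poisson_cdf(x, lam),
              normal_approx(x, lam), normal_approx(x, lam, continuity=False))
```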

Some properties

If X and Y are two independent random variables that follow Poisson distributions, the first with parameter λ and the second with parameter μ, then X + Y is a random variable that follows a Poisson distribution with parameter λ + μ.

See also


- Probability

- Probability distribution

- Binomial distribution

- Normal distribution

Notes

References

- ^ Citation error: no text was provided for the reference named Poisson1837.

- ^ Citation error: no text was provided for the reference named deMoivre1711.

- ^ Citation error: no text was provided for the reference named deMoivre1718.

- ^ Citation error: no text was provided for the reference named deMoivre1721.

- ^ a b c d e f g h Citation error: no text was provided for the reference named Johnson2005.

- ^ Citation error: no text was provided for the reference named Stigler1982.

- ^ Citation error: no text was provided for the reference named Hald1984.

- ^ Citation error: no text was provided for the reference named Newcomb1860.

- ^ Citation error: no text was provided for the reference named vonBortkiewitsch1898.

- ^ Citation error: no text was provided for the reference named Yates2014.

- ^ For the proof, see: Proof wiki: expectation and Proof wiki: variance

- ^ Kardar, Mehran (2007). Statistical Physics of Particles. Cambridge University Press. p. 42. ISBN 978-0-521-87342-0. OCLC 860391091.

- ^ Dekking, Frederik Michel; Kraaikamp, Cornelis; Lopuhaä, Hendrik Paul; Meester, Ludolf Erwin (2005). A Modern Introduction to Probability and Statistics. Springer Texts in Statistics (in English). p. 167. doi:10.1007/1-84628-168-7. ISBN 978-1-85233-896-1.

- ^ Citation error: no text was provided for the reference named Ugarte2016.

- ^ Citation error: no text was provided for the reference named Helske2017.

- ^ Citation error: no text was provided for the reference named Choi1994.

- ^ Citation error: no text was provided for the reference named Riordan1937.

- ^ Citation error: no text was provided for the reference named Haight1967.

- ^ D. Ahle, Thomas (2022). "Sharp and simple bounds for the raw moments of the Binomial and Poisson distributions". Statistics & Probability Letters. 182: 109306. arXiv:2103.17027. doi:10.1016/j.spl.2021.109306.

- ^ Citation error: no text was provided for the reference named Lehmann1986.

- ^ Citation error: no text was provided for the reference named Raikov1937.

- ^ Citation error: no text was provided for the reference named vonMises1964.

- ^ Harremoes, P. (July 2001). "Binomial and Poisson distributions as maximum entropy distributions". IEEE Transactions on Information Theory. 47 (5): 2039–2041. doi:10.1109/18.930936. S2CID 16171405.

- ^ Citation error: no text was provided for the reference named Laha1979.

- ^ Mitzenmacher, Michael (2017). Probability and computing: Randomization and probabilistic techniques in algorithms and data analysis. Eli Upfal (2nd ed.). Cambridge, UK. Exercise 5.14. ISBN 978-1-107-15488-9. OCLC 960841613.

- ^ a b Citation error: no text was provided for the reference named Mitzenmacher2005.

- ^ Short, Michael (2013). "Improved Inequalities for the Poisson and Binomial Distribution and Upper Tail Quantile Functions". ISRN Probability and Statistics. 2013. Corollary 6. doi:10.1155/2013/412958.

- ^ Short, Michael (2013). "Improved Inequalities for the Poisson and Binomial Distribution and Upper Tail Quantile Functions". ISRN Probability and Statistics. 2013. Theorem 2. doi:10.1155/2013/412958.

- ^ Citation error: no text was provided for the reference named Kamath2015.

- ^ Citation error: no text was provided for the reference named NIST2006.

- ^ Feller, William. An Introduction to Probability Theory and its Applications.

- ^ Citation error: no text was provided for the reference named Zhang2013.

- ^ Citation error: no text was provided for the reference named Zhang2016.

- ^ Citation error: no text was provided for the reference named McCullagh1989.

- ^ Citation error: no text was provided for the reference named Anscombe1948.

- ^ Citation error: no text was provided for the reference named Ross2010.

- ^ "1.7.7 – Relationship between the Multinomial and Poisson | STAT 504". Archived from the original on 6 August 2019. Retrieved 6 August 2019.

- ^ Citation error: no text was provided for the reference named Loukas1986.

- ^ Free Random Variables by D. Voiculescu, K. Dykema, A. Nica, CRM Monograph Series, American Mathematical Society, Providence RI, 1992

- ^ Alexandru Nica, Roland Speicher: Lectures on the Combinatorics of Free Probability. London Mathematical Society Lecture Note Series, Vol. 335, Cambridge University Press, 2006.

- ^ Lectures on the Combinatorics of Free Probability by A. Nica and R. Speicher, pp. 203–204, Cambridge Univ. Press 2006
